Two of the assumptions of multiple linear regression are:

- Normality: the data follows a normal distribution.
- Linearity: the line of best fit through the data points is a straight line, rather than a curve or some sort of grouping factor.

How to perform a multiple linear regression

Multiple linear regression formula

The formula for a multiple linear regression is:

$$y = \beta_0 + \beta_1 X_1 + \dots + \beta_n X_n + \epsilon$$

- $y$ = the predicted value of the dependent variable.
- $\beta_0$ = the y-intercept (value of y when all other parameters are set to 0).
- $\beta_1 X_1$ = the regression coefficient ($\beta_1$) of the first independent variable ($X_1$) (a.k.a. the effect that increasing the value of the independent variable has on the predicted y value).
- … = do the same for however many independent variables you are testing.
- $\beta_n X_n$ = the regression coefficient of the last independent variable.
- $\epsilon$ = the model error (a.k.a. how much variation there is in our estimate of $y$).

To find the best-fit line for each independent variable, multiple linear regression calculates three things, among them the regression coefficients that lead to the smallest overall model error.
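As a rough illustration of what "the regression coefficients that lead to the smallest overall model error" means in practice, the sketch below fits an intercept and two coefficients by ordinary least squares using only the Python standard library. The function names (`solve_linear_system`, `fit_ols`, `predict`) and the six data rows are hypothetical, and the data are deliberately noise-free so the known coefficients can be recovered exactly. It is a minimal sketch, not how any particular statistics package implements the fit.

```python
# Minimal ordinary least squares for y = b0 + b1*x1 + b2*x2 (standard library only).
# All names and data here are illustrative assumptions, not taken from the article.

def solve_linear_system(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    # Augmented matrix, so each row operation applies to both sides.
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        # Swap in the row with the largest pivot to limit rounding error.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    # Back-substitution on the upper-triangular system.
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(m[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (m[r][n] - tail) / m[r][r]
    return x


def fit_ols(rows, y):
    """Return [b0, b1, ..., bn] that minimise the sum of squared errors."""
    # Prepend a constant 1 to each row so that b0 acts as the y-intercept.
    design = [[1.0] + list(r) for r in rows]
    k = len(design[0])
    # Normal equations: (X^T X) b = X^T y.
    xtx = [[sum(d[i] * d[j] for d in design) for j in range(k)] for i in range(k)]
    xty = [sum(d[i] * yi for d, yi in zip(design, y)) for i in range(k)]
    return solve_linear_system(xtx, xty)


def predict(coefs, row):
    """Predicted y for one observation: b0 + b1*x1 + ... + bn*xn."""
    return coefs[0] + sum(b * x for b, x in zip(coefs[1:], row))


# Made-up observations of two independent variables (x1, x2).
rows = [(1, 2), (2, 1), (3, 5), (4, 3), (5, 8), (6, 2)]
# Noise-free outcome generated from known coefficients 2.0, 0.5 and -1.5.
y = [2.0 + 0.5 * x1 - 1.5 * x2 for x1, x2 in rows]

coefs = fit_ols(rows, y)
print(coefs)
print(predict(coefs, (10, 4)))
```

With real, noisy data the recovered coefficients would only approximate the underlying relationship, and a statistics package would also report standard errors and p values, which this sketch leaves out.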

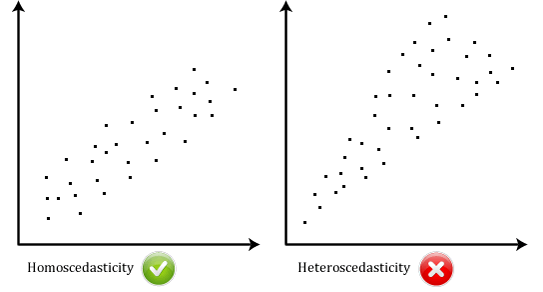

You can use multiple linear regression when you want to know:

- How strong the relationship is between two or more independent variables and one dependent variable (e.g. how rainfall, temperature, and amount of fertilizer added affect crop growth).
- The value of the dependent variable at a certain value of the independent variables (e.g. the expected yield of a crop at certain levels of rainfall, temperature, and fertilizer addition).

Multiple linear regression example

You are a public health researcher interested in social factors that influence heart disease. You survey 500 towns and gather data on the percentage of people in each town who smoke, the percentage of people in each town who bike to work, and the percentage of people in each town who have heart disease.

Because you have two independent variables and one dependent variable, and all your variables are quantitative, you can use multiple linear regression to analyze the relationship between them.

Assumptions of multiple linear regression

Multiple linear regression makes all of the same assumptions as simple linear regression:

- Homogeneity of variance (homoscedasticity): the size of the error in our prediction doesn’t change significantly across the values of the independent variable.
- Independence of observations: the observations in the dataset were collected using statistically valid sampling methods, and there are no hidden relationships among variables.

In multiple linear regression, it is possible that some of the independent variables are actually correlated with one another, so it is important to check these before developing the regression model. If two independent variables are too highly correlated (r² > ~0.6), then only one of them should be used in the regression model.
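The correlation check described above can be sketched in a few lines. The helper names (`pearson_r`, `too_correlated`) and the six-town values are hypothetical, invented only to show the check; the 0.6 cutoff is the approximate threshold quoted in the text, not a universal rule.

```python
from math import sqrt


def pearson_r(a, b):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / sqrt(var_a * var_b)


def too_correlated(a, b, r2_threshold=0.6):
    """True if the squared correlation exceeds the (approximate) cutoff."""
    return pearson_r(a, b) ** 2 > r2_threshold


# Hypothetical percentages for six towns (illustrative values only).
biking = [10, 20, 30, 40, 50, 60]
smoking = [30, 12, 25, 8, 20, 15]
# Hypothetical second biking measure that nearly duplicates the first.
biking_km = [1.1, 2.0, 3.2, 3.9, 5.1, 6.0]

print(too_correlated(biking, smoking))    # weakly related predictors
print(too_correlated(biking, biking_km))  # near-duplicate predictors
```

In this made-up data, biking and smoking could both stay in the model, while only one of the two biking measures should be kept.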

Multiple Linear Regression | A Quick Guide (Examples)

Regression models are used to describe relationships between variables by fitting a line to the observed data. Regression allows you to estimate how a dependent variable changes as the independent variable(s) change. Multiple linear regression is used to estimate the relationship between two or more independent variables and one dependent variable.

Multiple Regression Stata: Interpretation of Multiple Regression Analysis

The whole regression model with all of these factors is statistically significant, as shown by the regression output: F(6, 556) = 10.16, p < .001, adjusted R-squared = .09. So, look first at the overall significance of the model (Prob > F, #1), then at R-squared or adjusted R-squared (#2), and, if #1 is significant, at the individual p values of each predictor (#3).

The adjusted R-squared of .09 indicates that the independent variables in the model explain 9% of the variance in the outcome variable. When you have a lot of independent variables in your model, adjusted R2 is preferable because it accounts for the number of variables in the model. R2 tends to increase as you add more variables, so using adjusted R2 is a good way to make sure that the variables you include are useful (i.e., not just junk). R2 or adjusted R2 also indicates effect size, with bigger values indicating larger effect sizes and thus more desirable results. According to Cohen (1988), the average effect size in social science domains is medium.

Gender (beta = .23, p < .001), age (beta = .10, p < .05), and parental engagement (beta = -.10, p < .05) are all significant predictors, according to the regression output. It’s worth noting that if Prob > F isn’t significant, you don’t need to report the significant predictors.
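The reported figures can be cross-checked against each other. The sketch below assumes an ordinary least squares model with an intercept, so that the sample size follows from the degrees of freedom in F(6, 556) as n = 6 + 556 + 1 = 563; that sample size is an inference, not a number stated in the output. It uses the standard identities R² = F·k / (F·k + df_resid) and adjusted R² = 1 − (1 − R²)(n − 1)/(n − k − 1). The function names are hypothetical.

```python
def r_squared_from_f(f_stat, df_model, df_resid):
    """R^2 implied by the overall F statistic of an OLS model with an intercept."""
    return (f_stat * df_model) / (f_stat * df_model + df_resid)


def adjusted_r_squared(r2, n_obs, n_predictors):
    """Adjusted R^2: penalises R^2 for the number of predictors in the model."""
    return 1 - (1 - r2) * (n_obs - 1) / (n_obs - n_predictors - 1)


# Values reported in the text: F(6, 556) = 10.16.
f_stat, df_model, df_resid = 10.16, 6, 556
# Assumption: the model includes an intercept, so n = k + residual df + 1.
n_obs = df_model + df_resid + 1

r2 = r_squared_from_f(f_stat, df_model, df_resid)
adj_r2 = adjusted_r_squared(r2, n_obs, df_model)

print(round(r2, 4), round(adj_r2, 4))
```

Under these assumptions the implied R² is a little under .10 and the adjusted value rounds to .09, which agrees with the adjusted R-squared quoted in the text. The small gap between the two is the penalty for having six predictors.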
